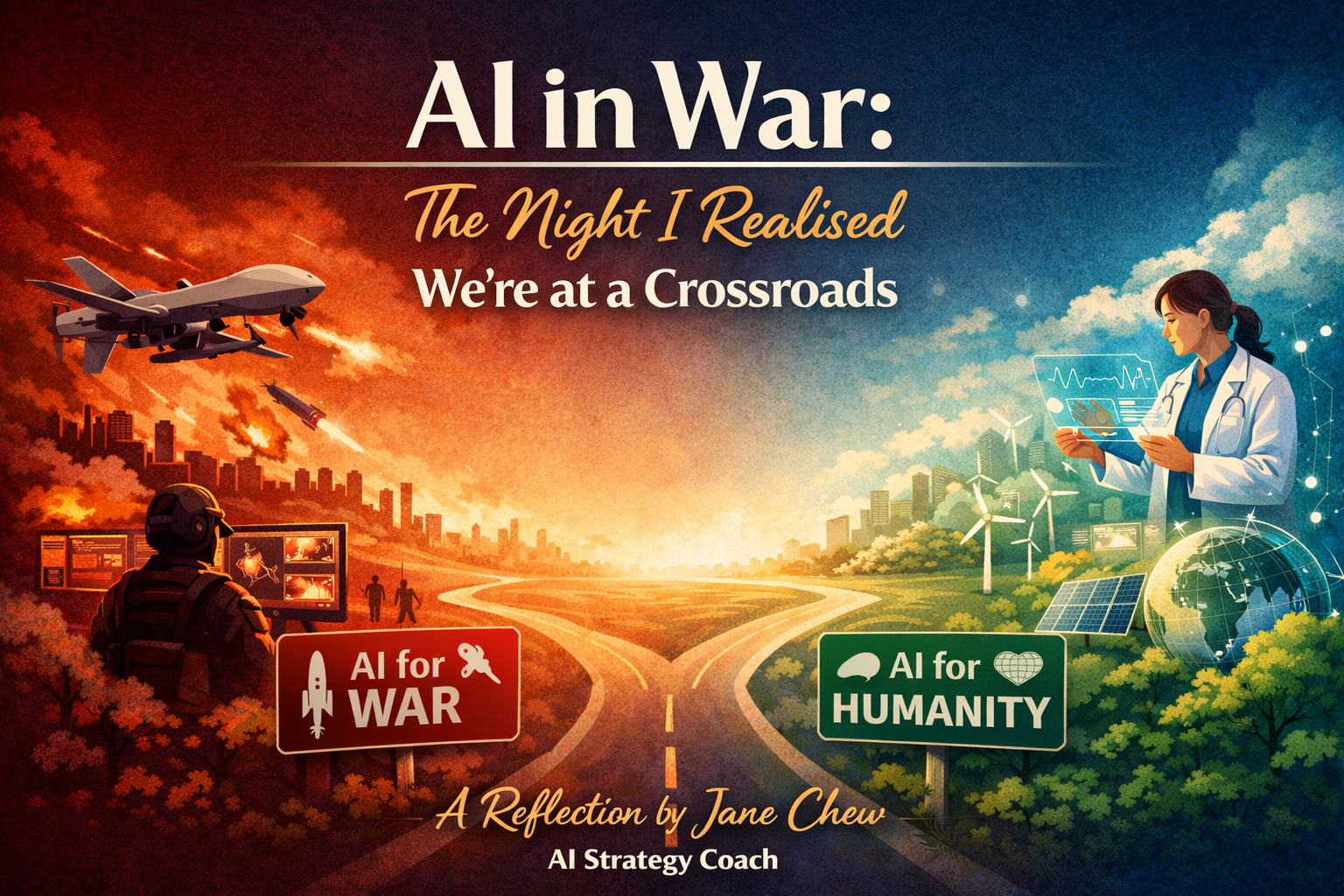

AI in War: The Night I Realised We’re at a Crossroads

A reflection by Jane Chew, AI Strategy Coach — advocating AI for humanity, not machine-speed destruction.

This post follows a reflective, story-led tone — grounded in credible reporting and humanitarian guidance.

The night that didn’t feel quiet

It was one of those ordinary evenings — the kind where your mind is still busy, but your body finally slows down. I clicked play expecting another discussion on AI trends, productivity, and “what’s next.”

But halfway through, something shifted. Not because the content was sensational — but because it landed on a truth many leaders haven’t fully absorbed yet: AI is no longer just changing business decisions. It is shaping battlefield decisions.

When technology compresses decision-making time, it also compresses reflection — and reflection is where humanity lives.

As an AI Strategy Coach, I spend most of my time helping business owners use AI for growth: better systems, stronger positioning, clearer customer strategy. But that night, I was reminded: the same engine can be pointed in different directions.

What changed: war decisions at machine speed

AI doesn’t only make decisions “smarter.” It makes them faster. And in warfare, speed changes everything.

When intelligence analysis and targeting cycles shrink from days to hours — and hours to minutes — we enter a world where human judgment can be reduced to a checkbox: approve, confirm, execute.

This is why people use terms like “machine-speed warfare.” It’s not just about technological capability. It’s about whether humans still have time to think, question, and say: stop.

Why the Iran reporting matters

Recent reporting described how AI was embedded into military workflows to help generate and prioritize targets during the U.S. campaign in Iran — accelerating operations in a way traditional analyst teams could not match.[1]

Another report highlighted how deeply AI suppliers’ models have become embedded in defense software, so much so that replacing a model can disrupt major operational systems and contracts.[2]

Regardless of which model sits behind which interface, the strategic insight is clear: AI is now inside the “decision pipeline” of modern conflict.

The real risk isn’t just accuracy — it’s acceleration

When people debate AI in war, they often start with accuracy: “Is the model correct?” “Is the target identification reliable?”

But the bigger strategic risk is acceleration. AI can increase operational speed so dramatically that humans become rubber stamps — not decision-makers.

Even within the AI industry, this hard truth has been acknowledged publicly. The Guardian reported Sam Altman admitting that OpenAI cannot control how the Pentagon uses its AI: once systems are deployed for defense, companies may not control how governments use them in real operations.[3]

That’s why this isn’t just a technical conversation. It’s a governance conversation. A leadership conversation. A humanity conversation.

“Meaningful human control” isn’t a slogan

International humanitarian voices, including the United Nations Regional Information Centre (UNRIC), have been clear: AI in conflict is not a distant future issue. It is already here, and it demands rules that keep humanity in control.[4]

Human Rights Watch has warned that autonomous weapons and digital decision-making can create grave risks for human rights, and it advocates prohibitions and regulations centered on meaningful human control.[5]

The International Committee of the Red Cross (ICRC) has also pushed for limits and regulation of autonomous weapon systems, emphasizing compliance with international humanitarian law and the need for meaningful human control.[6]

This is the heart of the issue: if we outsource moral judgment to machines, we don’t just change warfare — we change what it means to be human.

What leaders can do (yes, even in business)

Here’s the uncomfortable truth: the leadership muscles needed to govern AI in war are the same muscles needed to govern AI in business — clarity, boundaries, accountability, and values.

The “AI Power” Leadership Checklist

- Define the boundary: which decisions must never be automated (especially where harm is involved).

- Design true human-in-command: not a human clicking “approve,” but a human who understands and can challenge the recommendation.

- Audit the decision chain: what data, what incentives, what failure modes, what escalation paths.

- Assign accountability: so “the algorithm did it” is never an excuse.

- Build AI literacy: leaders must understand strategic impact, not just tool usage.
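For teams that want to see what the checklist could look like in a business system, here is a minimal sketch in Python. Everything in it is hypothetical and for illustration only: the `NEVER_AUTOMATE` list, the `DecisionGate` class, the example decision types, and the 20-character rationale threshold are my assumptions, not an established standard. A length check is also only a crude stand-in for genuine understanding, so treat this as a conversation starter rather than a finished control.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical boundary list: decision types that must never be automated.
NEVER_AUTOMATE = {"terminate_employee", "deny_medical_claim"}

# Assumed minimum length for a written rationale (a crude proxy for real review).
MIN_RATIONALE_CHARS = 20


@dataclass
class Recommendation:
    decision_type: str       # e.g. "send_discount_offer"
    action: str              # what the AI system proposes
    data_sources: list[str]  # inputs the recommendation relied on


@dataclass
class HumanReview:
    reviewer: str            # a named, accountable person
    approved: bool
    rationale: str           # why the reviewer agrees or disagrees


@dataclass
class DecisionGate:
    audit_log: list[dict] = field(default_factory=list)

    def decide(self, rec: Recommendation, review: Optional[HumanReview] = None) -> str:
        if review is None:
            # Boundary: protected decisions never run without a human.
            if rec.decision_type in NEVER_AUTOMATE:
                outcome = "blocked: human decision required"
            else:
                outcome = "executed automatically"
        elif len(review.rationale.strip()) < MIN_RATIONALE_CHARS:
            # Human-in-command: a bare click on "approve" is not a review.
            outcome = "blocked: review lacks a rationale"
        elif review.approved:
            outcome = "executed with human approval"
        else:
            outcome = "rejected by human"

        # Audit and accountability: record what was decided, on what data, by whom.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "decision_type": rec.decision_type,
            "action": rec.action,
            "data_sources": rec.data_sources,
            "reviewer": review.reviewer if review else None,
            "outcome": outcome,
        })
        return outcome


gate = DecisionGate()
rec = Recommendation("terminate_employee", "end contract", ["hr_records"])
print(gate.decide(rec))
print(gate.decide(rec, HumanReview("j.tan", True, "ok")))
print(gate.decide(rec, HumanReview("j.tan", False, "Records omit the approved medical leave period.")))
```

The three calls at the end show the intent: a protected decision is blocked without a human, a rubber-stamp approval is blocked too, and a reasoned rejection is honored and logged against a named reviewer.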

In business, we call this “governance.” In war, we call it “humanity.” But the principle is the same: power without guardrails is not progress.

Let’s use AI for humanity

AI is a multiplier of intention. It can accelerate medicine, education, climate resilience, and economic opportunity — or it can accelerate destruction.

My advocacy as an AI Strategy Coach is simple: AI must be governed by humanity, not speed. Because the future of AI won’t be decided by models. It will be decided by the values of the people who deploy them.

If you’re a leader adopting AI in your organization, start with strategy and boundaries — not tools. (That’s how we keep AI aligned with human outcomes.)

Sources

1. Washington Post, “Anthropic’s AI tool Claude central to U.S. campaign in Iran…” (4 Mar 2026). Used for: AI-assisted target generation/prioritization; integration of Claude with Palantir’s Maven Smart System.

2. Reuters, “Palantir faces challenge to remove Anthropic from Pentagon’s AI software” (4 Mar 2026). Used for: dependency and disruption risk when AI models are embedded into defense software stacks.

3. The Guardian, “Sam Altman admits OpenAI can’t control Pentagon’s use of AI” (4 Mar 2026). Used for: limits of vendor control once systems are deployed in defense contexts.

4. UNRIC (United Nations Regional Information Centre), “AI in conflict: keeping humanity in control” (14 Oct 2025). Used for: “AI in war is already here”; need for regulation to protect humanitarian principles.

5. Human Rights Watch, “A Hazard to Human Rights: Autonomous Weapons Systems and Digital Decision-Making” (28 Apr 2025). Used for: meaningful human control; risks to human rights; treaty recommendations.

6. ICRC, “Autonomous weapons” (law & policy overview) and ICRC position on autonomous weapon systems (ongoing). Used for: definition/concerns; meaningful human control; IHL compliance.

7. NATO SHAPE, “NATO acquires AI-enabled Warfighting System” (14 Apr 2025). Used for: broader adoption of AI-enabled warfighting systems (Maven Smart System NATO).

8. UN Press, First Committee resolution coverage on lethal autonomous weapons (1 Nov 2023). Used for: global security concerns; international discussion momentum.

Note: This article is a leadership reflection and does not endorse any military action. It focuses on governance, ethics, and the strategic implications of AI in conflict.

FAQ

Is AI already being used to support military targeting?

Reporting in early March 2026 described AI tools being used within military workflows to help generate and prioritize targets during operations in Iran. See Washington Post and Reuters coverage in the Sources section for specifics.[1][2]

What is the biggest danger of “AI in war”?

Accuracy matters — but the deeper danger is acceleration. When the decision cycle compresses to machine speed, human judgment can become superficial. That increases the risk of mistakes, escalation, and accountability gaps. This is why humanitarian groups emphasize meaningful human control.[4][5]

What does “meaningful human control” actually mean?

It means humans remain genuinely responsible for decisions to use force — with understanding, time to assess, and the ability to intervene — not merely supervising a machine that is effectively deciding. Human Rights Watch and the ICRC both argue that safeguards and regulation are needed to maintain this principle.[5][6]

Why should business leaders care about AI in war?

Because the governance challenge is the same: AI is a power multiplier. Without boundaries, accountability, and oversight, speed replaces wisdom. If leaders practice strong AI governance in business — clear limits, audits, accountability — it becomes easier to advocate for responsible AI governance everywhere.

What’s a practical first step to “AI for humanity”?

Start with a values-based AI policy and a decision boundary list: define which decisions must never be automated, how humans stay in command, and how you audit AI outputs. AI should improve human outcomes — not replace human responsibility.